Meta: The Only AI Regulatory Body That Matters

Meta's Llama-2 LLM is the backbone of open source AI. It is used by tens of thousands of developers and researchers and exploited by criminals and adversaries, yet the usage terms ban the US DOD. Why?

Author’s note: Near-term hype cycle aside, there is no question that AI will change humanity. We need to stay focused on the challenges in building LLMs and bringing them into our lives, our work (I’m working on this), and our society. We should not embrace a handful of organizations pushing for regulatory capture, or be distracted by self-proclaimed Arms Dealers of nuclear-grade AI (eye roll). The opportunity and the future are too important for charlatans and unnecessary barriers.

Meta is playing a key role in preventing the Balkanization and bastardization of AI. If Meta continues its pursuit, it may be remembered as the driving force in realizing the greatest benefits from AI. However, Meta miscalculated the licensing terms of its technologies, and it needs to fix them.

Introduction

Outside of Taylor Swift and Barbenheimer, nothing quite captured the American zeitgeist this summer like the parade of Silicon Valley CEOs, investors, and experts testifying in DC about AI and regulation. The whole spectacle had the pageantry of Taylor Swift’s Eras Tour with cameras, costumes (suits), and sound bites galore. It’s even possible that AI will one day surpass Taylor and the Swifties in terms of GDP growth.

Yet amid the drama, speeches, and never-ending media coverage, very little has been said about the most important regulatory body that has shaped much of the AI world: Meta. The Meta team rocked the AI community by releasing Llama, a direct competitor to OpenAI’s ChatGPT and GPT-4. Llama came a month after OpenAI secured a $10B investment from Microsoft. In July, Meta followed up with possibly the most important AI milestone of 2023 and beyond by offering its Llama-2 LLM to everyone! It’s open source! An ecosystem formed around it, with 10,000 derivatives used by hundreds of thousands.

Forgot to mention, it is free for all to use, except for the US Department of Defense. Wait, what? The technology company that has spent the better part of the last decade battling regulatory agencies in the US and Europe pulled a fast one and prohibited the government’s military from using arguably its most powerful technologies. Touché! Zuck pulled a switcheroo!

Let’s start with the fine print.

In conjunction with its smashingly successful launch of Llama-2, Meta launched its Community License. The license grants the great majority of the world unlimited rights to use, repurpose, and distribute Llama-2 and to create derivative models. It has been a boost to AI researchers, developers, and startups and has bolstered an ecosystem of use cases and new types of models.

The Usage Policy within the license is where things get a bit more interesting. Understandably, Meta prohibits usage for human or drug trafficking, violence and crime, and phishing/spam. The terms also prohibit massive companies (>700M monthly active users) from using Llama-2 (sorry TikTok, MSFT, etc). Finally, the policy adds a little-discussed term in section 2a:

Military, warfare, nuclear industries or applications, espionage, use for materials or activities that are subject to the International Traffic Arms Regulations (ITAR) maintained by the United States Department of State.

The policy statement closes by asking that violations of the usage terms be reported by email; no repercussions are specifically listed.

What does this practically mean?

For Meta, the policy provides legal protection against the use and abuse of Llama-2 and other models. It also stakes out an ethical high ground for those at 1 Hacker Way: the models' creators can claim they are open source and can say they do no harm.

For countries, militaries, and organizations that have a propensity for stealing IP or are engaged in illegal or criminal activity, this restriction means nothing. From propaganda creation and cyberattacks to near-perfect translation and transcription for surveillance, Llama-2 and MMS are likely part of the toolkit.

DOD leaders (and lawyers) read the fine print, and the use of Llama-2 is off the table. The ecosystem of derivatives that share some DNA with Llama-2 cannot be used. Meta’s Massively Multilingual Speech (MMS) model, its extremely powerful model for speech and translation (Community License), cannot be used either.

Why it matters.

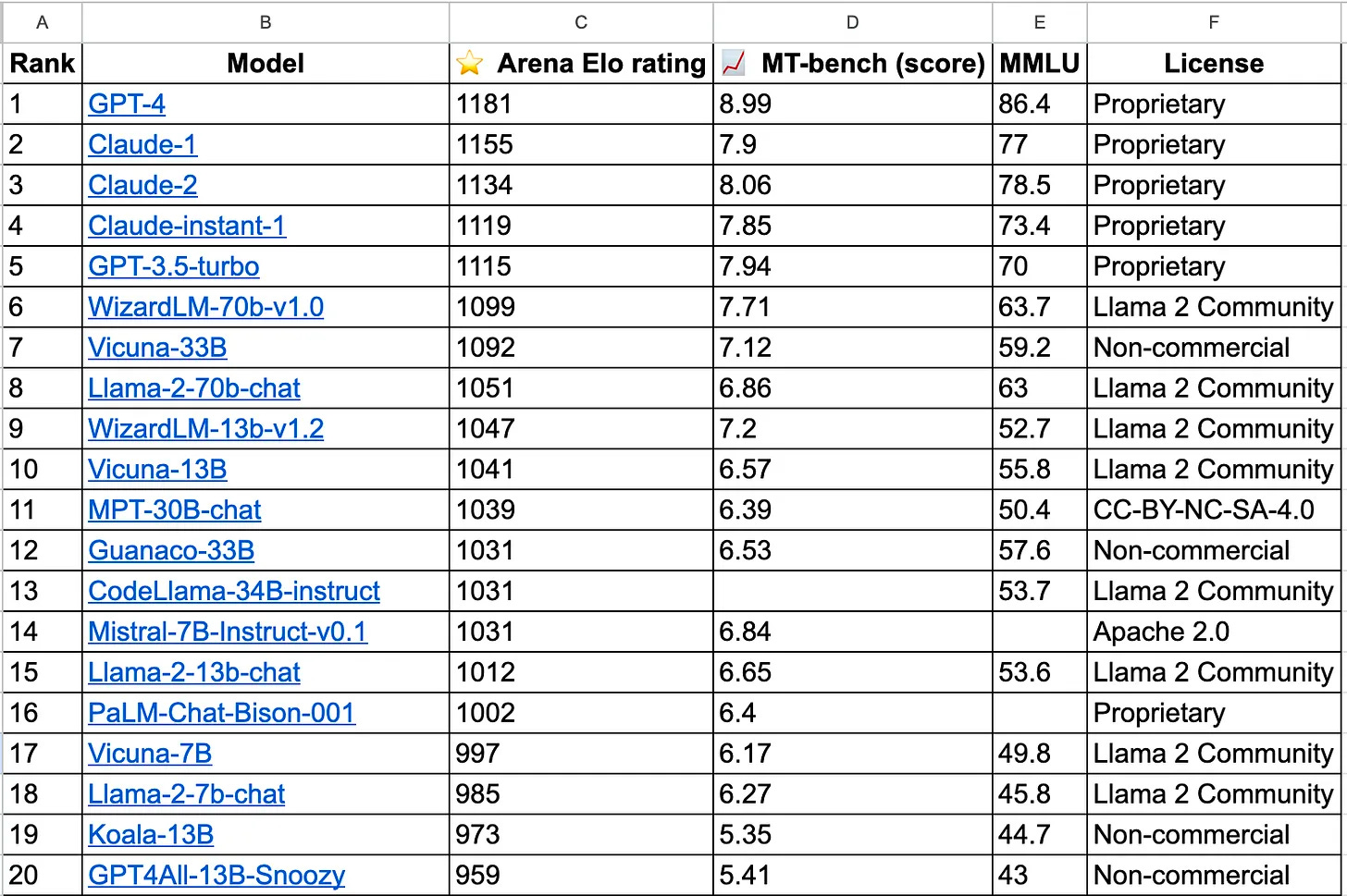

Simply put, Llama-2 is the most important driver of open source AI. In a world where a handful of vendors are seeking to lock up the space, Meta is still the best counterbalance. Of the Top 20 performing models (Table 1), 40% are tied to the Community License. From a derivatives and distribution standpoint, Llama models and the Community License are tied to about 10,000 models with more than 10 million downloads.

The major issue is that as open source advancements continue, a larger portion of models will likely be tied to or share some DNA with the Llama models, increasingly shutting the DOD out of using them.

Won’t some other company just step in?

A handful of companies could certainly step in to fill the void, but there are reasons to doubt that any will be the real guiding force for the US Public Sector and specifically the DOD. I won’t personally call out any, but across the options there are issues:

Cloud vendors really just want you in their cloud using their models, and their models and compute can become cost-prohibitive or try to be one-model-fits-all.

Some companies have privately drawn a line against working with the DOD.

Some have said they also want to work with China.

Some companies have CFIUS concerns.

Some are fielding models that are pretty mediocre.

On the open source front, it’s worth noting that there are a few companies that have released models that carry the Apache 2.0 licensing terms and can be used by everyone. However, because of acquisitions, geographic locations, and overall direction, it’s unclear if their investments in open source LLMs will continue.

Shouldn’t the DOD just go build LLMs itself?

The US Intelligence Community has stepped up to the challenge. Randy Nixon, head of the CIA’s Open-Source Enterprise, announced that the CIA is building its own ChatGPT-style model to help analysts “find the needles in the needle field.” However, it is unclear if the CIA model will be more widely available for use by the US Government and within the DOD. It will also likely be tuned for a specific set of tasks that don’t capture the broad range of potential DOD use cases in logistics, personnel management, training, doctrine, budgeting, etc. Finally, while it may be useful in a comparable setting, will CIAGPT continue to evolve at the pace of Meta?

In theory, the DOD certainly has the financial means to build some homegrown LLMs. There are pre-existing data labeling companies and cloud compute companies working with the DOD. Access to the GPUs required to build them would definitely be limited, but the DOD could train using open source data and cloud compute, and then transfer the models to higher classifications. The Machine Learning Engineering talent could be a mix of DOD personnel, contractors, etc.

There is historical precedent in AI for the DOD taking matters into its own hands. Only a few years ago the DOD elected to build its own convolutional neural nets (CNNs) so it could own its computer vision models. The same may happen again for LLMs, and with the uncertainty of some tech companies and the bloviated posturing of others, the DOD would understandably take things into its own hands.

But I can’t escape the fundamental question: Why force the United States to incur this cost to produce something that will possibly be worse than Llama-2 and most certainly Llama-3 (speculated for early 2024)?

Some parting thoughts for Meta.

Team Meta, more than any organization, you stepped up and nailed it. You’ve given tools to millions of students, researchers, and working professionals around the world now pushing the boundaries of AI, work, medicine, music, and life. You leveled the playing field for hundreds of other startups (including mine) building LLM-based technologies without being reliant on a few Foundational Model vendors. When others shut the door on the open source community that helped them, you busted the door back open. You understand the implications of regulatory capture on technology that cannot simply be captured. Thank you.

By ultimately deciding that the DOD cannot use Llama, Llama-2, MMS, or derivatives, you have unilaterally given adversarial nations, organizations, and criminals an advantage while not allowing our country to defend itself with the same tools. Whatever your intentions, you have hamstrung the people and organizations that protect the ideals and freedoms you promote on your platform.

As you continue to advance the state of AI for all developers, companies, and the world, rethink your position, and embrace the community that protects the Community and everything you stand for. Thank you.

If you are still reading and want to learn a bit about what we’re working on for the USG at Yurts, check us out or drop me an email at ben@yurts.ai.